How Microsoft’s bet on Azure unlocked an AI revolution

About five years ago, artificial intelligence research organization OpenAI pitched Microsoft on a bold idea that it could build AI systems that would forever change how people interact with computers.

At the time, nobody knew it would mean AI systems that create pictures of whatever people describe in plain language or a chatbot to write rap lyrics, draft emails and plan entire menus based on a handful of words. But technology like this was possible. To build it, OpenAI needed computing horsepower – on a truly massive scale. Could Microsoft deliver it?

Microsoft was decades into its own efforts to develop AI models that help people work with language more efficiently, from the automatic spell checker in Word to AI tools that write photo captions in PowerPoint and translate across more than 100 languages in Microsoft Translator. As these AI capabilities improved, the company applied its expertise in high-performance computing to scale up infrastructure across its Azure cloud that allowed customers to use its AI tools to build, train and serve custom AI applications.

As AI researchers started using more powerful graphics processing units, known as GPUs, to handle more complex AI workloads, they began to glimpse the potential for much larger AI models that could understand nuances so well they were able to tackle many different language tasks at once. But these larger models quickly ran up against the boundaries of existing computing resources. Microsoft understood what kind of supercomputing infrastructure OpenAI was asking for – and the scale that would be required.

“One of the things we had learned from research is that the larger the model, the more data you have and the longer you can train, the better the accuracy of the model is,” said Nidhi Chappell, Microsoft head of product for Azure high-performance computing and AI. “So, there was definitely a strong push to get bigger models trained for a longer period of time, which means not only do you need to have the biggest infrastructure, you have to be able to run it reliably for a long period of time.”

In 2019, Microsoft and OpenAI entered a partnership, which was extended this year, to collaborate on new Azure AI supercomputing technologies that accelerate breakthroughs in AI, deliver on the promise of large language models and help ensure AI’s benefits are shared broadly.

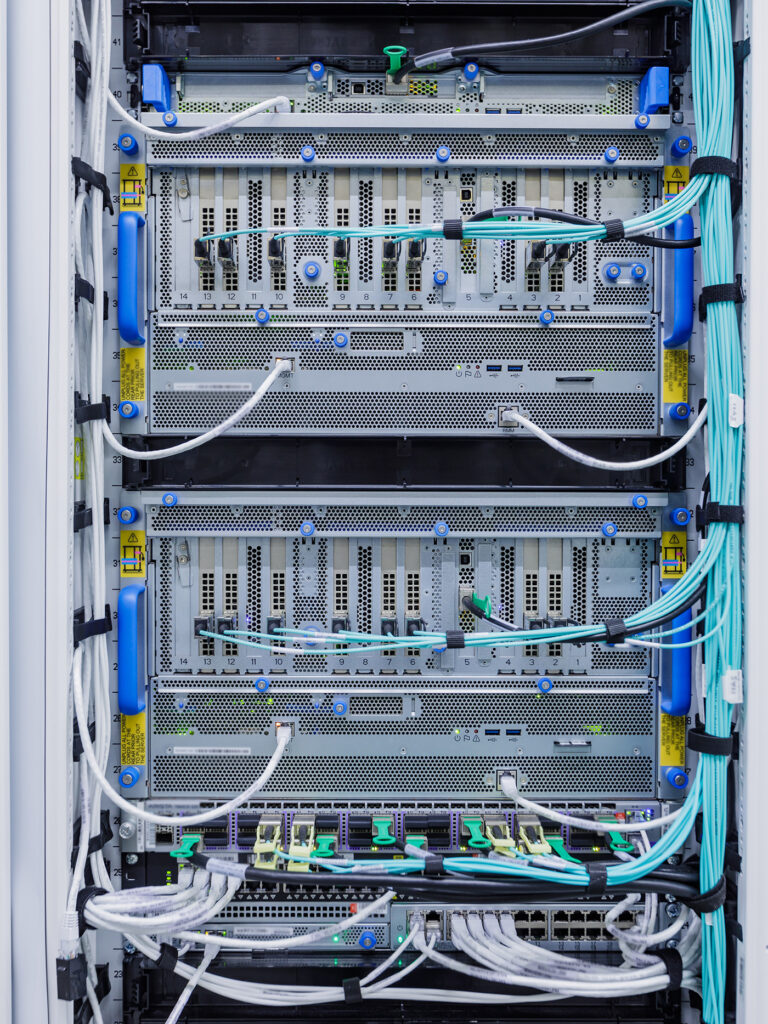

The two companies began working in close collaboration to build supercomputing resources in Azure that were designed and dedicated to allow OpenAI to train an expanding suite of increasingly powerful AI models. This infrastructure included thousands of NVIDIA AI-optimized GPUs linked together in a high-throughput, low-latency network based on NVIDIA Quantum InfiniBand communications for high-performance computing.

The scale of the cloud-computing infrastructure OpenAI needed to train its models was unprecedented – exponentially larger clusters of networked GPUs than anyone in the industry had tried to build, noted Phil Waymouth, a Microsoft senior director in charge of strategic partnerships who helped negotiate the deal with OpenAI.

Microsoft’s decision to partner with OpenAI was rooted in the conviction that this unprecedented infrastructure scale would yield results – new AI capabilities, a new type of programming platform – that Microsoft could transform into products and services that offer real benefit to customers, Waymouth said. This conviction fueled the companies’ ambition to overcome any technical challenges in building that infrastructure and to continue to push boundaries on AI supercomputing.

“That shift from large-scale research happening in labs to the industrialization of AI allowed us to get the results we’re starting to see today,” he said.

This includes search results in Bing that piece together a dream vacation, the chatbot in Viva Sales that drafts marketing emails, GitHub Copilot that draws context from software developers’ existing code to suggest additional lines of code and functions, removing drudgery from computer programming, and Azure OpenAI Service, which provides access to OpenAI’s large language models with the enterprise-grade capabilities of Azure.

“Co-designing supercomputers with Azure has been crucial for scaling our demanding AI training needs, making our research and alignment work on systems like ChatGPT possible,” said Greg Brockman, president and co-founder of OpenAI.

Microsoft and its partners continue to advance this infrastructure to keep up with increasing demand for exponentially more complex and larger models.

For example, today Microsoft announced new powerful and massively scalable virtual machines that integrate the latest NVIDIA H100 Tensor Core GPUs and NVIDIA Quantum-2 InfiniBand networking. Virtual machines are how Microsoft delivers to customers infrastructure that can scale to size for any AI task. Azure’s new ND H100 v5 virtual machine provides AI developers exceptional performance and scaling across thousands of GPUs, according to Microsoft.

Large-scale AI training

The key to these breakthroughs, said Chappell, was learning how to build, operate and maintain literally tens of thousands of co-located GPUs connected to each other on a high-throughput, low-latency InfiniBand network. This scale, she explained, is larger than even the suppliers of the GPUs and networking equipment have ever tested. It was uncharted territory. Nobody knew for sure if the hardware could be pushed that far without breaking.

To train a large language model, she explained, the computation workload is partitioned across thousands of GPUs in a cluster. At certain phases in this computation – called allreduce – the GPUs exchange information on the work they’ve done. An InfiniBand network accelerates this phase, which must finish before the GPUs can start the next chunk of computation.
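The allreduce step described above can be illustrated with a minimal sketch in plain Python. It is a toy simulation, not Microsoft's or OpenAI's training code: the workers are ordinary lists rather than GPUs, the model is a single weight fitted to y = w * x, and the names `allreduce_mean`, `local_gradient` and `train_step` are hypothetical. In a real cluster the exchange would be handled by a collective communication library running over the InfiniBand network.

```python
from typing import List, Tuple

Shard = List[Tuple[float, float]]  # (x, y) pairs held by one simulated worker


def allreduce_mean(local_grads: List[List[float]]) -> List[List[float]]:
    """Give every worker the element-wise mean of all workers' gradients."""
    n_workers = len(local_grads)
    n_params = len(local_grads[0])
    mean = [sum(g[i] for g in local_grads) / n_workers for i in range(n_params)]
    # After an allreduce, each worker holds its own copy of the same result.
    return [list(mean) for _ in range(n_workers)]


def local_gradient(weights: List[float], shard: Shard) -> List[float]:
    """Gradient of mean squared error for the toy model y = w * x on one shard."""
    w = weights[0]
    return [sum(2 * (w * x - y) * x for x, y in shard) / len(shard)]


def train_step(weights: List[float], shards: List[Shard], lr: float = 0.1) -> List[float]:
    """One data-parallel step: local compute, allreduce, identical update."""
    # Phase 1: each worker computes a gradient on its own partition of the data.
    grads = [local_gradient(weights, shard) for shard in shards]
    # Phase 2: allreduce. No worker can start the next step until this finishes.
    reduced = allreduce_mean(grads)
    # Phase 3: every worker applies the same update, so the replicas stay in sync.
    return [w - lr * g for w, g in zip(weights, reduced[0])]


if __name__ == "__main__":
    shards = [[(1.0, 2.0)], [(2.0, 4.0)]]  # two workers, data drawn from y = 2x
    weights = [0.0]
    for _ in range(20):
        weights = train_step(weights, shards)
    print(weights)  # the single weight approaches 2.0
```

Because the second phase is a barrier, the slowest exchange sets the pace for every worker, which is why the speed of the network matters as much as the speed of the individual GPUs.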

“Because these jobs span thousands of GPUs, you need to make sure you have reliable infrastructure, and then you need to have the network in the backend so you can communicate faster and be able to do that for weeks on end,” Chappell said. “This is not something that you just buy a whole bunch of GPUs, hook them together and they’ll start working together. There is a lot of system level optimization to get the best performance, and that comes with a lot of experience over many generations.”

The system-level optimization includes software that enables effective utilization of the GPUs and networking equipment. Over the past several years, Microsoft has developed software techniques that have expanded its ability to train models with tens of trillions of parameters, while simultaneously driving down the resource requirements and the time needed to train and serve them in production.

Microsoft and its partners have also been incrementally adding capacity to the GPU clusters, growing the InfiniBand network and seeing how far they can push the datacenter infrastructure required to keep the GPU clusters operating, including cooling systems, uninterruptible power supply systems and backup generators, noted Waymouth.

“The reason it worked is because we were building similar systems for our internal teams and there are complementary elements there,” he said. “But the scale at which we were doing it with OpenAI was simply much larger either internally or with external partners.”

Today, this Azure infrastructure optimized for large language model training is available via Azure AI supercomputing capabilities in the cloud, said Eric Boyd, Microsoft corporate vice president for AI Platform. This resource provides the combination of GPUs, networking hardware and virtualization software required to deliver the compute needed to power the next wave of AI innovation.

“We saw that we would need to build special purpose clusters focusing on enabling large training workloads, and OpenAI was one of the early proof points for that,” Boyd said. “We worked closely with them to learn what are the key things they were looking for as they built out their training environments and what were the key things they need.”

“Now, when other people come to us and want the same style of infrastructure, we can give it to them because that’s the standard way we do it,” he added.

AI for everyone

Early in Microsoft’s development of AI-optimized cloud-computing infrastructure, the company focused on specialized hardware to accelerate the real-time calculations AI models make when they are deployed for task completion, which is known as inferencing. Today, inferencing is when an AI model writes the first draft of an email, summarizes a legal document, suggests the menu for a dinner party, helps a software programmer find a piece of code, or sketches a concept for a new toy.

Bringing these AI capabilities to customers around the world requires AI infrastructure optimized for inferencing. Today, Microsoft has deployed GPUs for inferencing throughout the company’s Azure datacenter footprint, which spans more than 60 regions around the world. This is the infrastructure customers use, for example, to power chatbots customized to schedule healthcare appointments and run custom AI solutions that help keep airlines on schedule.

As trained AI model sizes grow larger, inference will require GPUs networked together in the same way they are for model training in order to provide fast and cost-efficient task completion, according to Chappell. That’s why Microsoft has been growing the ability to cluster GPUs with InfiniBand networking across the Azure datacenter footprint.

“Because the GPUs are connected in a faster network, you can fit larger models on them,” she explained. “And because the model communicates with itself faster, you will be able to do the same amount of compute in a smaller amount of time, so it is cheaper. From an end customer point of view, it’s all about how cheaply we can serve inference.”

To help speed up inferencing, Microsoft has invested in systems optimization with the Open Neural Network Exchange Runtime, or ONNX Runtime, an open-source inferencing engine that incorporates advanced optimization techniques to deliver up to 17 times faster inferencing. Today, ONNX Runtime executes more than a trillion inferences a day and enables many of the most ubiquitous AI-powered digital services.

Teams across Microsoft and Azure customers around the world are also using this global infrastructure to fine-tune large AI models for specific use cases, from more helpful chatbots to more accurate auto-generated captions. The unique ability of Azure’s AI-optimized infrastructure to scale up and scale out makes it ideal for many of today’s AI workloads, from AI model training to inference, according to Boyd.

“We’ve done the work to really understand what it’s like to offer these services at scale,” he said.

Continuing to innovate

Microsoft continues to innovate on the design and optimization of purpose-built AI infrastructure, Boyd added. This includes working with computer hardware suppliers and datacenter equipment manufacturers to build cloud computing infrastructure from the ground up that provides the highest performance, the greatest scale and the most cost-effective solution possible.

“Having the early feedback lessons from the people who are pushing the envelope and are on the cutting edge of this gives us a lot of insight and head start into what is going to be needed as this infrastructure moves forward,” he said.

This AI-optimized infrastructure is now standard throughout the Azure cloud computing fabric, which includes a portfolio of virtual machines, connected compute and storage resources optimized for AI workloads.

Building this infrastructure unlocked the AI capabilities seen in offerings such as OpenAI’s ChatGPT and the new Microsoft Bing, according to Scott Guthrie, executive vice president of the Cloud and AI group at Microsoft.

“Only Microsoft Azure provides the GPUs, the InfiniBand networking and the unique AI infrastructure necessary to build these types of transformational AI models at scale, which is why OpenAI chose to partner with Microsoft,” he said. “Azure really is the place now to develop and run large transformational AI workloads.”

Top image: The scale of Microsoft datacenters provides the infrastructure platform for many AI advances, including Azure OpenAI Service and the models that power Microsoft Bing. Photo courtesy of Microsoft.

John Roach writes about research and innovation.