When Michael Collins peered out a window of Apollo 11’s command module, orbiting the moon alone while Neil Armstrong and Buzz Aldrin made their historic lunar walk below, he saw a blue and white planet with no borders. It struck him that humans would have a better future if political leaders could also see the world that way, as a whole globe, and learn to collaborate.

But he wouldn’t be able to share his now-famous musings with folks back home until he set foot on Earth again, in part due to severely limited connectivity between the spaceship and ground control.

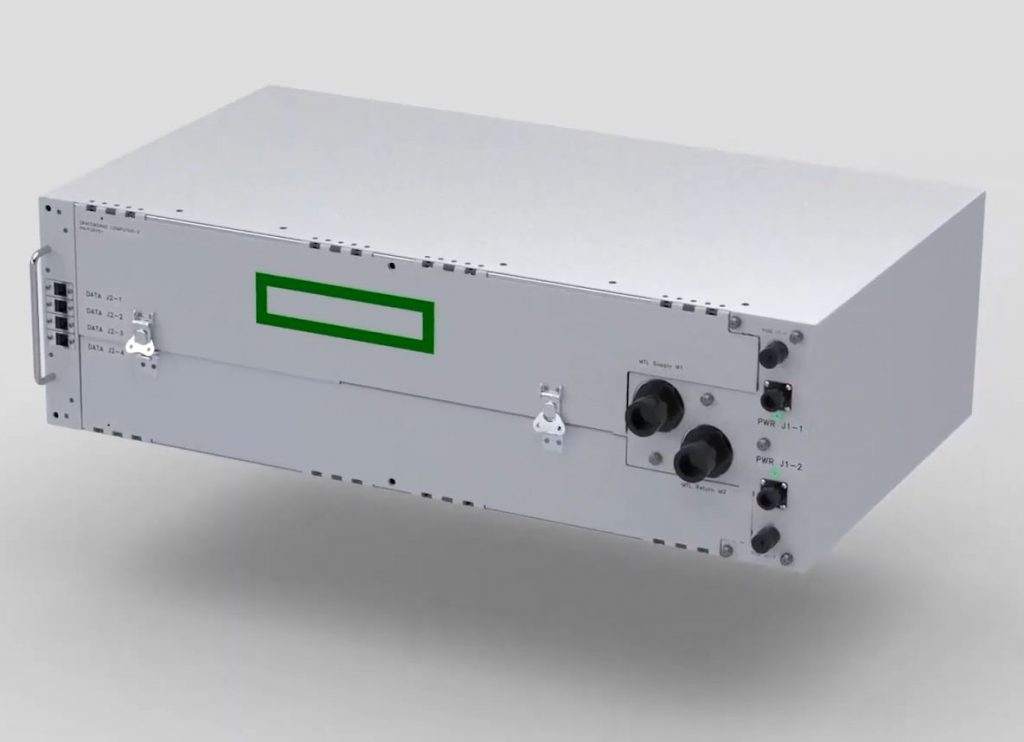

HPE’s Spaceborne Computer-2 is about the size of a microwave oven (Photo by NASA)

Software engineers and researchers at several companies are working together to change that through a new partnership that improves communication and enables experiments that will help propel astronauts farther into space while also improving the lives of people on Earth. It’s all built around a supercomputer the size of a small microwave oven that is being linked to the cloud from space.

“I grew up with space on the nightly news, and now we’re back again with a new space race, powered by new technology,” says Christine Kretz, vice president of programs and partnerships at the International Space Station U.S. National Laboratory. New, reusable rockets are making space exploration more affordable and opening it up to more players, she says, “and that’s the new space. It’s tearing down rivalries and divisions.”

Kretz’s organization has been directed by NASA, through Congress, to manage the U.S. National Laboratory onboard the International Space Station (ISS), and it’s her job to hunt for groups — from universities to startups to tech giants — that will “make the best possible use of technology for this spacecraft that has become a floating laboratory circling the globe.”

Removing gravity from the equation has made a huge difference for scientists researching everything from combustion engines to air purification systems to cancer treatments. In its 21 years of human occupancy, the space station has hosted more than 3,000 experiments by more than 4,000 researchers from more than 100 countries.

With hundreds more ideas in the works, scientists need solid infrastructure and connection to run and access their experiments. That’s what a new partnership between Hewlett Packard Enterprise (HPE) and Microsoft aims to provide, with edge computing and the Azure cloud.

Until now, research data collected from the space station has been transmitted down in drips and drops because of competing priorities for the limited connectivity available. By the time researchers got their data, it was too late to make any necessary tweaks to the collection process or to react to any surprises that might pop up outside the influence of gravity.

That constrained connectivity can also delay critical decisions for the astronauts, who often have to wait for information to reach ground control, be analyzed there, and then come back with the necessary insight.

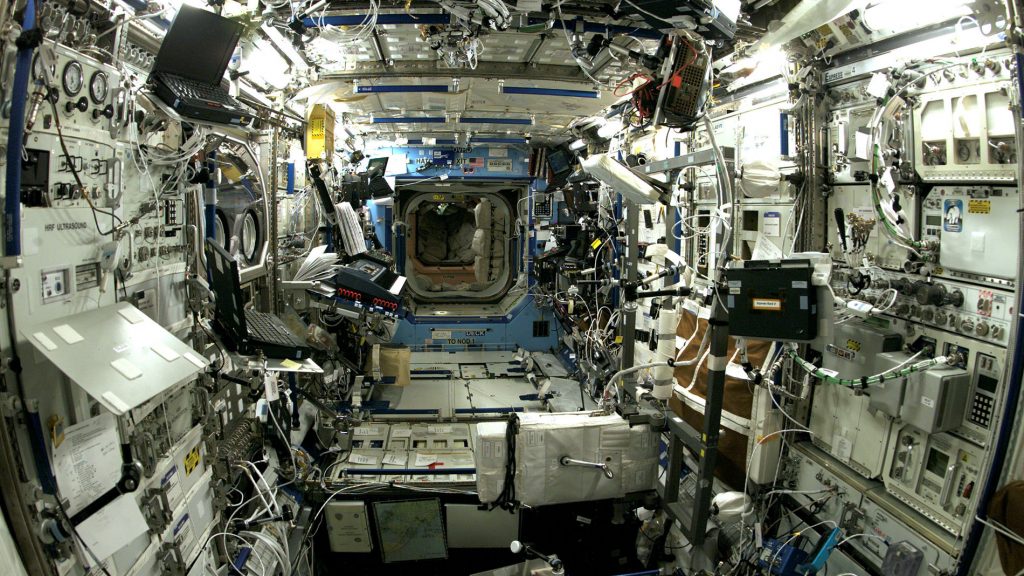

The Destiny Module inside the International Space Station, where astronauts perform many of the experiments (Photo by NASA)

That’s hard enough with the space station, which orbits as high as 250 miles above Earth. But the moon is almost a thousand times farther away than that, and Mars, at its farthest from Earth, is about a million times the station’s distance. So missions deeper into space will need stronger computing power at the astronauts’ fingertips and a better pipeline for sharing information.
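To put those ratios in perspective, here is a quick back-of-the-envelope check. The distances are rounded public figures used as assumptions (an average Earth-moon distance of about 238,900 miles, and roughly 250 million miles for Mars at its farthest from Earth), so the results are approximate.

```python
# Rounded distances in miles (assumed figures for illustration)
ISS_ALTITUDE_MI = 250                # upper end of the station's orbit
MOON_AVG_DISTANCE_MI = 238_900       # average Earth-moon distance
MARS_MAX_DISTANCE_MI = 250_000_000   # approximate Earth-Mars distance at its farthest

# How many times farther each destination is than the space station
moon_ratio = MOON_AVG_DISTANCE_MI / ISS_ALTITUDE_MI
mars_ratio = MARS_MAX_DISTANCE_MI / ISS_ALTITUDE_MI

print(f"Moon: about {moon_ratio:,.0f} times the station's altitude")
print(f"Mars: about {mars_ratio:,.0f} times the station's altitude")
```

The moon works out to a little under a thousand times the station’s altitude, and Mars at its farthest to about a million times.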

HPE was already designing supercomputers for NASA to use for the heavy computing required to plan missions. So the company took one of the hundreds of high-performance computing servers that make up a supercomputer and made sure it could fit in a rocket. Then it tested whether the server could survive the shaking and rattling of a launch, be installed by untrained personnel, and function in space, where stray cosmic rays can flip a computer’s 1s into 0s (or vice versa) and wreak havoc on the system.
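To show why a single flipped bit matters, the sketch below inverts one bit of a stored number and then catches the change with a simple parity check. Parity is a general, well-known detection technique used here only for illustration; it is an assumption, not a description of how Spaceborne Computer handles radiation, and the helper names are hypothetical.

```python
def flip_bit(value: int, bit: int) -> int:
    """Return value with one bit inverted, mimicking a cosmic-ray bit flip."""
    return value ^ (1 << bit)


def parity(value: int) -> int:
    """Parity bit: 1 if the value has an odd number of 1 bits, else 0."""
    return bin(value).count("1") % 2


original = 1000
stored_parity = parity(original)      # recorded when the value is written

corrupted = flip_bit(original, 20)    # hypothetical strike on bit 20
print(original, corrupted)            # 1000 1049576

# A mismatch reveals that an odd number of bits changed
print("flip detected:", parity(corrupted) != stored_parity)
```

A single parity bit can only detect an odd number of flipped bits and cannot say which bit changed; error-correcting codes go further by locating and repairing the flip.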

It worked.

And now the second iteration — Spaceborne Computer-2, sent up to the ISS in February — incorporates the proven approach of the first mission, with a significantly more advanced system that’s purposely engineered for harsh environments and for processing artificial intelligence and analytics, says Mark Fernandez, HPE’s principal investigator for the project.

Spaceborne Computer-2 is powerful enough to do the work of analyzing data at the source of collection — right there in space — with a process called edge computing. It’s as if your hand normally sent information to your brain and had to wait for analysis and response before giving the signal to pull back from a hot stove, and then it suddenly got the ability to analyze the temperature right at the fingertip itself and decide to immediately recoil from the heat.

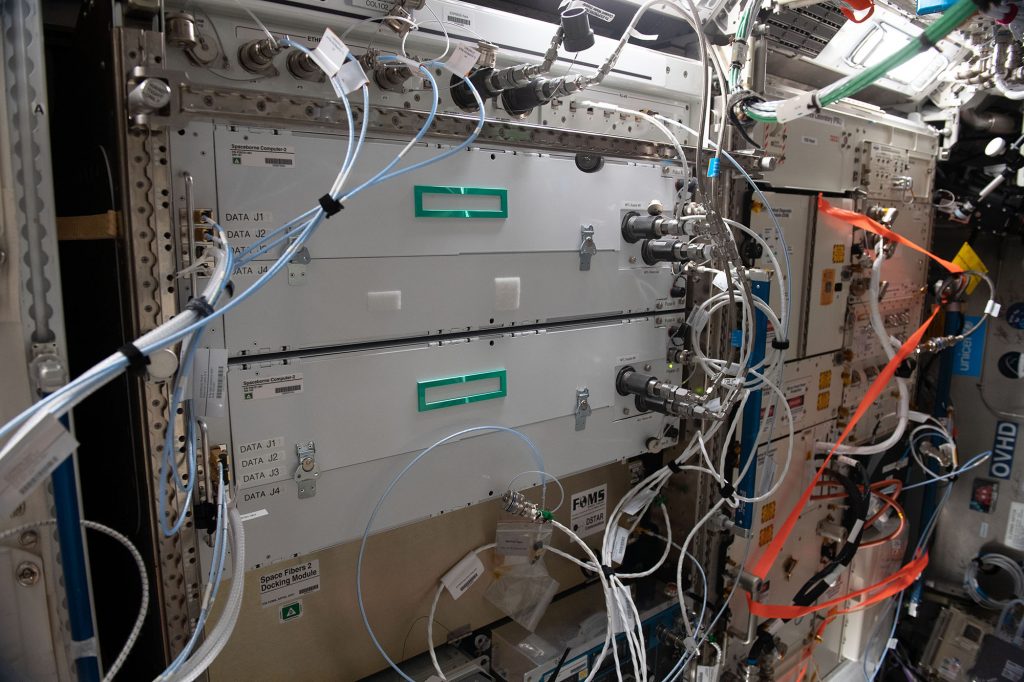

The Spaceborne Computer-2 on the International Space Station (Photo by NASA)

There are hundreds of instruments on the space station, some that collect data constantly and some that require video to be sent down frequently. Reducing the amount of data that has to be transmitted frees up that stream for more scientific experiments.
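As a rough illustration of why analyzing data onboard saves bandwidth, the sketch below collapses a batch of sensor readings into a few summary values before anything is sent down. The readings are randomly generated and the `summarize` helper is hypothetical; real instruments on the station have their own data formats and reduction rules.

```python
import json
import random
import statistics


def summarize(readings: list[float]) -> dict:
    """Reduce a batch of raw readings to a few summary numbers."""
    return {
        "count": len(readings),
        "mean": round(statistics.fmean(readings), 3),
        "min": min(readings),
        "max": max(readings),
    }


random.seed(0)
# Hypothetical batch of 100,000 temperature-like readings
raw = [random.gauss(20.0, 0.5) for _ in range(100_000)]

# Compare the size of what would be transmitted in each case
raw_bytes = len(json.dumps(raw).encode())
summary_bytes = len(json.dumps(summarize(raw)).encode())
print(f"raw: {raw_bytes:,} bytes, summary: {summary_bytes} bytes")
```

Summaries like this trade detail for bandwidth, so in practice unusual readings would still need to be kept and sent in full.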

Still, computations too large for the onboard system must be sent down to Earth for help.

One of the experiments the partners are undertaking, for example, addresses the needs of healthcare for astronauts on longer space missions. The effects on a human body of lengthy sojourns in space aren’t fully known, making technology that allows frequent monitoring of changes over time especially important.

So the experiment tests whether astronauts can continually monitor their health despite the added radiation exposure onboard a spacecraft, a risk that could grow even greater when a planet like Mars is a seven-month journey away from medical treatment. Astronauts in the experiment analyze their genome data for anomalies. Those anomalies are then compared against a National Institutes of Health database to find out whether there are any new mutations and, if so, whether they are benign and the mission can continue, or whether they are ones often linked to cancer that may require immediate care back on Earth. It’s the ultimate test of telemedicine, which is also being eyed for remote locations around the world.


But sequencing a single human genome, about 6 billion characters, generates about 200 gigabytes of raw data, and Spaceborne Computer-2 is allotted only two hours of communication time a week for transmitting data to Earth, at a maximum download speed of 250 kilobytes per second. That’s less than 2 gigabytes a week, not even enough to download a Netflix movie, meaning it would take about two years to transmit just one genomic dataset.
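The arithmetic behind those figures can be checked in a few lines. This sketch assumes decimal units (1 kilobyte = 1,000 bytes, 1 gigabyte = 1,000,000,000 bytes) and that the full two-hour window runs at the maximum rate, which real links rarely sustain.

```python
# Figures from the article, in decimal units
WINDOW_SECONDS_PER_WEEK = 2 * 60 * 60   # two hours of downlink time a week
RATE_BYTES_PER_SECOND = 250 * 1000      # 250 kilobytes per second
GENOME_BYTES = 200 * 1000**3            # about 200 gigabytes of raw data

# Best case: the whole window is used at full speed
weekly_bytes = WINDOW_SECONDS_PER_WEEK * RATE_BYTES_PER_SECOND
weeks_needed = GENOME_BYTES / weekly_bytes

print(f"per week: {weekly_bytes / 1000**3:.1f} GB")
print(f"weeks for one genome: {weeks_needed:.0f} (~{weeks_needed / 52:.1f} years)")
```

That comes to 1.8 gigabytes a week and about 111 weeks, or roughly two years, for a single 200-gigabyte dataset.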

“It’s like being back on a dial-up modem in the ’90s,” says David Weinstein, a principal software engineering manager for Microsoft’s Azure Space division, which was created last year to support those already in the space sector while also drawing new entrants by integrating its cloud with other companies’ satellite platforms.

When many organizations run out of room for computations on their own computer systems, they temporarily “burst” the overflow up to the cloud. Weinstein’s team flipped that idea: it’s the same pattern, he says, just bursting down to the cloud from space instead. When the space station runs out of computing room during an experiment, it will automatically burst down to Azure’s huge network of computers for help, connecting space and Earth to solve the problem in the cloud.
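A minimal sketch of the bursting idea: jobs run onboard while local capacity remains, and anything that doesn’t fit is queued to burst down to the cloud. The `Job` and `BurstScheduler` classes, the CPU-hour budget, and the job names are all hypothetical; this illustrates the general pattern, not Azure Space’s actual implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    name: str
    cpu_hours: float


@dataclass
class BurstScheduler:
    """Run jobs locally until onboard capacity is used up; queue the rest to burst."""
    local_capacity: float                     # hypothetical onboard budget in CPU-hours
    local: list[str] = field(default_factory=list)
    burst: list[str] = field(default_factory=list)

    def submit(self, job: Job) -> str:
        if job.cpu_hours <= self.local_capacity:
            self.local_capacity -= job.cpu_hours
            self.local.append(job.name)
            return "local"
        # Overflow: would be sent to the cloud when a downlink window opens
        self.burst.append(job.name)
        return "burst"


sched = BurstScheduler(local_capacity=10.0)
for job in [Job("filter-reads", 4.0), Job("align", 5.0), Job("full-analysis", 40.0)]:
    print(job.name, "->", sched.submit(job))
```

Here the first two jobs fit in the onboard budget and run locally, while the large analysis exceeds what is left and is queued to burst down.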

Spaceborne Computer-2 can scour the data onboard, following code written by engineers to find events or anomalies that need extra scrutiny (such as mutations, in the case of the genome experiment), and then burst just those bits down to Earth and into Azure instead of laboring to send billions of characters. From there, scientists anywhere in the world can use the power of cloud computing to run their algorithms for analysis and decisions, drawing on millions of computers running in parallel in Azure data centers spread across 65 regions around the globe and linked by 165,000 miles of fiber-optic cable.
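To illustrate sending only the interesting bits, the sketch below compares a sample sequence against a reference and keeps just the positions where they differ. It is a deliberately simplified assumption: it handles only single-letter substitutions in sequences that are already aligned, whereas real variant calling also deals with alignment, insertions, deletions and read quality. The function name and sequences are hypothetical.

```python
def find_substitutions(reference: str, sample: str) -> list[tuple[int, str, str]]:
    """Return (position, reference_base, sample_base) wherever aligned bases differ."""
    if len(reference) != len(sample):
        raise ValueError("this sketch assumes pre-aligned sequences of equal length")
    return [
        (i, ref, alt)
        for i, (ref, alt) in enumerate(zip(reference, sample))
        if ref != alt
    ]


reference = "ACGTACGTACGTACGTACGT"
sample = "ACGTACGAACGTACGTTCGT"

variants = find_substitutions(reference, sample)
print(variants)  # [(7, 'T', 'A'), (16, 'A', 'T')]
print(f"send {len(variants)} records instead of {len(sample)} bases")
```

Only the two differing positions would need to travel to Earth; on a genome of billions of characters, that difference in volume is what makes the downlink workable.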

Fernandez recalls one researcher’s frustration that it would take months to get her data from the space station. He offered to help, and the Spaceborne Computer processed her dataset in six minutes, compressed it and then downloaded a file that was 20,000 times smaller, he says.

“So we went from months to minutes,” Fernandez says. “And that’s when the lightbulb went off.”

Speed matters all the more because Congress has authorized a budget for the space station only through 2024.

“We’ve got to get as much as we can done in the time we have left,” Kretz says.

HPE has completed four initial experiments so far — including bursting data down to the Microsoft cloud, with a successful “hello world” message — and has four others underway and 29 more in the queue, Fernandez says. Some of the tests have to do with healthcare, such as the genome experiment and transmitting sonograms and X-rays of the astronauts. Others are in life sciences, such as analyzing the crops grown onboard for longer missions to determine if an odd-looking potato is just deformed due to the lack of gravity — a new variable in biology — or is infected with something and needs to be destroyed. And still others deal with space travel and satellite-to-satellite communication.

Space activity and exploration have a huge impact on daily life on Earth, whether it’s broadband internet or GPS satellites, which provide navigation as well as timing signals for systems such as financial networks, so a driver can run a credit card at a gas pump. And the constraints of spacecraft, which are getting smaller and more powerful but still have to be lightweight and fully self-contained, are forcing engineers and developers to rethink assumptions about their designs.

That’s prompting innovations that lead to breakthroughs in applied science with a variety of uses back on ground, says Steve Kitay, who was the deputy assistant secretary for space policy for the U.S. Department of Defense before joining Microsoft last year to lead Azure Space. And the impact has ballooned since SpaceX and others have introduced new rockets, he says, including some that can be reused, massively reducing the cost to launch them.


“Space is going through this major transformation period,” Kitay says. “It has historically been an environment dominated by major states and governments, because it was so expensive to build and launch space systems. But what’s happening now is rapid commercialization of space that’s opening up new opportunities for many more actors.”

The novel use of open-source software, with code that’s public for any programmer to develop and customize, has made it easier to build programs that can run in the space station, says Glenn Musa, a senior software engineer for Azure Space. And since Spaceborne Computer-2 has an Azure connection with the same off-the-shelf tools and languages as computers on Earth, he says, developers “no longer have to be special space engineers or rocket scientists” to build apps for the ISS but can do so with the skills of a high school computer science student.

Those working on space exploration today “were born too late for the space race of the last century but too early to venture out into space in our own rocket ships,” Musa says. “But once we’re able to connect devices from space to the computers we have here on Earth, we open up a big sandbox, and we can all be part of experimentation in space and developing new technologies that we’ll use in the future.

“This wasn’t possible before, but now the possibilities are endless.”

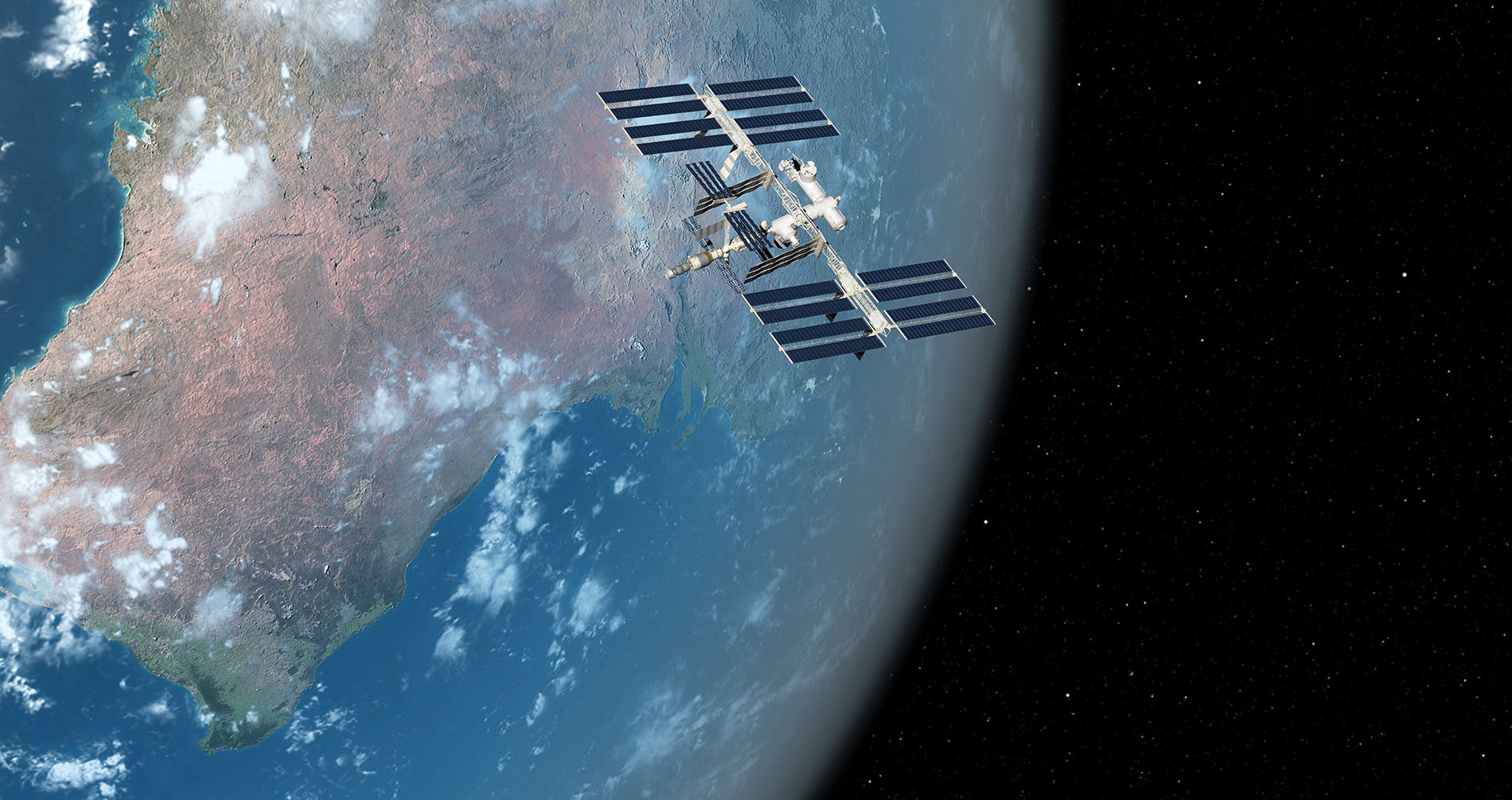

Top photo: An illustration of the International Space Station above Earth (Image by SciePro/Getty Images)