Microsoft’s virtual datacenter grounds ‘the cloud’ in reality

What is “the cloud”?

It’s where remote learners and workers gather for virtual lessons and meetings, where gamers converge to build worlds, race cars and blast away foes, and where healthcare workers have been keeping track of COVID-19 patients and the resources they need to treat them.

It’s also where companies quickly and securely respond to customers’ needs and where conglomerates streamline communications among dispersed employees and manage supply chain logistics for product portfolios.

But, nuts and bolts, what is “the cloud”?

“It is not nebulous magic, nor is it a single supercomputer,” a narrator explains in an introductory video to a new microsite that takes you on a virtual tour of a Microsoft datacenter. “The cloud is a globally interconnected network of millions of computers in datacenters around the world that work together to store and manage data, run applications and deliver content and services.”

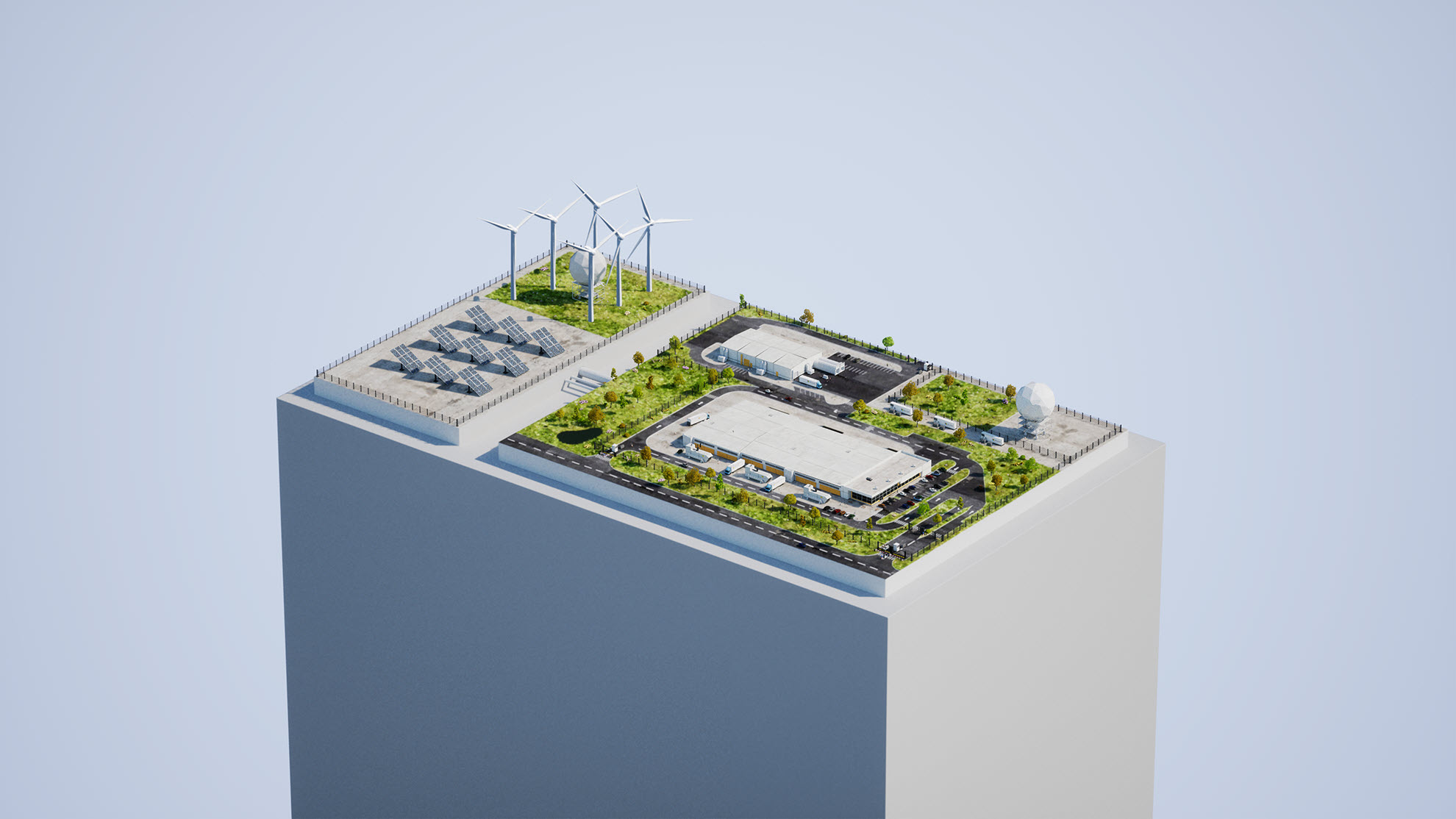

The virtual datacenter experience, which the company unveiled today, is an immersive digital tour of a typical Microsoft datacenter. Visitors can take the tour via a personal computer or mobile device.

“It makes the cloud real for people and less high-tech and highfalutin,” said Noelle Walsh, a Microsoft corporate vice president who leads the team that builds and operates the company’s cloud infrastructure.

Datacenters, she added, are just like houses – buildings with electrical and mechanical equipment. Granted, the Microsoft Cloud is on a different scale than any house and operates at a much higher degree of reliability. Currently, the company operates more than 200 datacenters, and that number continues to grow. To date, the company’s operating and planned datacenter footprint spans 34 countries around the world, all networked together via more than 165,000 miles of subsea, terrestrial and metro optical fiber.

What’s more, Microsoft is slated to add datacenters in at least 10 more countries this year, and the company is on pace to build between 50 and 100 new datacenters each year for the foreseeable future, Walsh said.

Visitors who click into the virtual datacenter experience learn about the infrastructure required to build datacenters, the renewable energy that powers them and the hardware and software that keep data secure. The final stop on the tour offers a glimpse of the future, including servers kept cool in tanks of boiling liquid and modular datacenters deployed on the seafloor in submarine-like tubes.

“Datacenters look way cooler in movies and commercials,” cautioned Brian Janous, general manager of Microsoft’s team for datacenter energy strategy. “There’re not a lot of bells and whistles. But that is really evidence of the fact that they’re optimized for efficiency. There’s a lot that’s not readily visible.”

Physical and cyber security

Most Microsoft datacenters are warehouse-sized, windowless amalgams of concrete, steel, copper and fiber surrounded by a fence. “If you were to drive by a datacenter, you probably wouldn’t know that that was a datacenter,” Walsh said, noting that the nondescript nature is by design. “We generally don’t publicize where we are.”

The virtual datacenter experience is a rare invitation for the driver to tap the brakes, pause and look around.

The high-security perimeter fence is one of many layers of physical security in place to control access into and out of the datacenter, noted Mark Russinovich, a Microsoft technical fellow and Azure’s chief technology officer. There are also security cameras and a guardhouse. Upon entry, additional physical security measures include a check-in station where visitors show their credentials and a one-way door that prevents people from taking anything unauthorized in or out of the datacenter.

“You won’t see the backend monitoring of all those systems,” Russinovich said. “There’s automated monitoring looking for anomalies of who’s given access, automated monitoring of the video feeds.”

From the outside, visitors will also see an array of electrical equipment required to power the datacenter. This includes at least two power lines fed in from the electric power grid for redundancy in case one line goes down, as well as onsite backup generators to power the servers in case of a power grid outage or other type of disruption. Today, most of Microsoft’s backup generators are diesel powered; longer term, the company plans to transition to low-carbon fuels, batteries or hydrogen fuel cells.

Microsoft is committed to powering all its datacenters and operations with the equivalent of 100% renewable sources of energy by 2025. The virtual datacenter experience shows wind turbines and solar panels as examples of the type of renewable energy the company is purchasing to reach that goal, though wind turbines and solar panels are rarely seen immediately adjacent to, or on top of, the company’s datacenters.

“One thing we get questioned about all the time is, ‘Why don’t you put solar panels on your datacenters?’” Janous said. The answer, he said, is “because it would really just be window dressing on what is a real problem we’re trying to solve, which is to accelerate the decarbonization of the electric grid and, frankly, putting solar panels on our datacenter would only be a drop in the bucket.”

The land footprint of each datacenter is roughly equivalent to that of a big-box department store, the type of business that could generate enough power with rooftop solar panels to meet its peak power demand. In a datacenter, however, “almost every square inch of the datacenter is utilized for moving electricity through servers, turning electricity into data. Only 1 to 2% of the energy consumed in that datacenter could be met with a rooftop solar system,” Janous explained.

Instead of rooftop solar panels, Microsoft enters financial agreements with power companies to build wind and solar farms across thousands of acres of land. When possible, the company also elects to build datacenters near hydroelectric dams, which provide a steady stream of green and reliable baseload power for datacenters.

Cool, protected and reliable

All those servers inside the datacenter turning energy into data generate heat as they operate, which requires an engineered cooling system to prevent the servers from malfunctioning. Microsoft datacenters in temperate climates such as the U.S. Pacific Northwest and Northern Europe use outside air for cooling except for the warmest of days. The same is true for hotter and more humid regions of the world, Janous noted.

On the 15% of days when temperature and humidity exceed the threshold for air cooling, Microsoft’s mechanical coolers use water to cool the air via the process of evaporation. As a company, Microsoft is moving toward waterless cooling technologies such as liquid cooling and aims to replenish more water than it consumes by the end of this decade.

Inside the datacenter, rows of server racks are typically laid out in what’s called a hot aisle and cold aisle configuration. T-shirt-weather air is blown into the cold aisle, which is wide enough for technicians to access individual servers for maintenance. As the servers operate, fans suck in air from the cold aisle and blow it over the servers. Hot air exits out the back of the servers, into the hot aisle, and is sucked up and out of the datacenter, where it is cycled through the mechanical coolers.

All the electrical and mechanical infrastructure at Microsoft datacenters keeps the company’s more than 4 million servers in datacenters around the world operating with what’s known as “five nines” of reliability, meaning they are available 99.999% of the time, Walsh noted.

“The server is the precious part,” she said.
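To put the “five nines” figure in concrete terms, the short Python sketch below converts an availability percentage into the downtime it would allow. It is illustrative arithmetic only: the function name `allowed_downtime_minutes` is hypothetical, and the one-year, 365-day measurement window is an assumption, since the article does not say over what period or how Microsoft measures reliability.

```python
# Illustrative arithmetic only: converts an availability percentage into
# the maximum downtime it would allow over a given period.
# Assumption: a 365-day year (the article does not specify a window).
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes


def allowed_downtime_minutes(availability_percent: float,
                             period_minutes: float = MINUTES_PER_YEAR) -> float:
    """Return the downtime budget, in minutes, for a given availability."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability_percent must be between 0 and 100")
    return period_minutes * (1 - availability_percent / 100)


if __name__ == "__main__":
    levels = [("three nines", 99.9), ("four nines", 99.99), ("five nines", 99.999)]
    for label, pct in levels:
        minutes = allowed_downtime_minutes(pct)
        print(f"{label} ({pct}%): about {minutes:.2f} minutes per year")
```

Under these assumptions, 99.999% availability leaves a budget of roughly five minutes of downtime per year, which is why each additional “nine” is considerably harder to reach than the last.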

Microsoft datacenter server rooms are where the cloud’s computing and storage capacity live. Microsoft has more than 4 million servers in datacenters around the world. Image courtesy of Microsoft.

Just as precious, Russinovich added, is maintaining the security and privacy of the data stored and processed inside the datacenter. Microsoft spends more than $1 billion each year on datacenter security across hardware, software, protocols and personnel, he noted.

“You’ll see best practices from physical access to the datacenter to the handling of media to even the way the data is stored on the devices, because software is encrypting the data before it lands on the devices,” he said.

As servers are upgraded, data is wiped from the old media drives and the drives are sometimes physically shredded to ensure the data is destroyed.

“We’re also encrypting the data so that even if there was an issue with something getting out, it’s still not going to be accessible,” Russinovich added.

Datacenter innovation

To meet sustainability goals on carbon and water while simultaneously keeping up with the demand for faster, more powerful datacenter servers, research and engineering teams across Microsoft have their eyes on the datacenter of the future. In fact, the pace of change over the next five years is likely to eclipse the pace of change over the past 20 years, according to Russinovich.

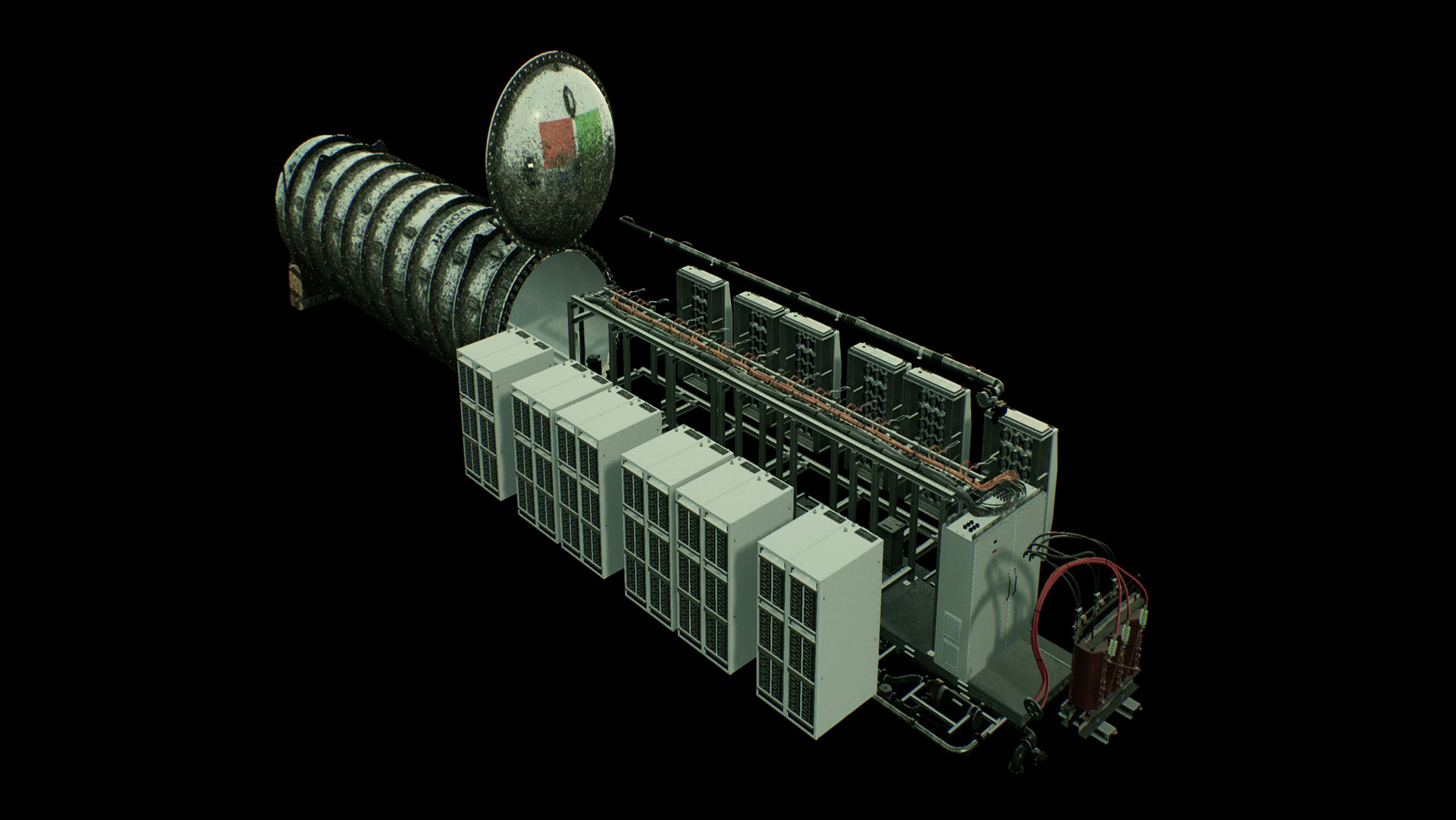

Microsoft’s Project Natick explored the potential to deploy datacenters onto the seafloor in submarine-like tubes. Image courtesy of Microsoft.

For example, more efficient, high-bandwidth networks will enable large-scale AI applications as well as the ability to transfer massive amounts of data, he said.

These fast and efficient datacenters will be enabled by innovations such as the liquid cooling and undersea datacenter concepts presented on the final stop of the virtual datacenter experience. These technologies allow for more densely packed servers and open the door for new software and hardware architectures optimized for low-latency, high-powered AI applications, Walsh noted.

These densely packed servers could also lead to a new breed of datacenters deployed for edge applications. Nevertheless, the hyperscale Microsoft datacenters that the company is building today and planning to build for the foreseeable future will continue to be a prominent part of the mix, Russinovich said.

“The hyperscale regions will probably still remain large just because the cloud demand will continue to grow,” he said.

Related

- Check out the virtual datacenter experience

- Read about Microsoft’s liquid cooling and undersea datacenter projects

- Learn more about sustainability at Microsoft

- See the Inside a Microsoft datacenter infographic

Top image: Microsoft’s virtual datacenter experience is an immersive, digital tour of a typical Microsoft datacenter that visitors take via a personal computer or mobile device. Image courtesy of Microsoft.

John Roach writes about Microsoft research and innovation. Follow him on Twitter.